After creating ELIZA, the first chatbot, Joseph Weizenbaum spent decades warning that artificial intelligence rests on a flawed vision of human nature.

A simulated therapy session with ELIZA, the 1960s computer program that pioneered chatbot technology—and revealed how easily humans project meaning onto machines. Public domain

User: Men are all alike.

Chatbot: In what way?

User: They’re always bugging us about something or other.

Chatbot: Can you think of a specific example?

User: Well, my boyfriend made me come here.

Chatbot: Your boyfriend made you come here?

User: He says I’m depressed much of the time.

Chatbot: I am sorry to hear you are depressed.

One might easily infer that this exchange occurred recently between ChatGPT and a human user. But that inference would be false. This conversation actually took place in the 1960s between a computer program called ELIZA and a human. This precursor to ChatGPT, which had modest capabilities in comparison yet could still generate a fluid simulated conversation with a human interlocutor, existed decades before the turn of the century. Today, ELIZA is recognized as the first chatbot, the great-grandmother, if you will, of today’s large language models, such as ChatGPT.

But the irony of ELIZA’s story is that its programmer—much like Mary Shelley’s Dr. Frankenstein—quickly recognized the danger lurking within his creation, and he spent much of the rest of his career warning about artificial intelligence (AI). He also adamantly criticized the technological and posthuman worldview championed by some of the pioneers of AI science.

The programmer’s name was Joseph Weizenbaum (1923–2008). He was a German scientist who fled Nazi Germany in his youth, and he was a significant figure in the early days of the artificial intelligence community—a man who would nevertheless become one of its greatest critics.

ELIZA’s Origin Story

Weizenbaum published the above transcript in a 1966 journal article in which he explained how ELIZA worked. Essentially, ELIZA imitated the Rogerian style of psychotherapy, in which the patient leads the conversation and the therapist interjects questions or simple statements to prompt further reflection on the patient’s part. That style was ideal for a primitive chatbot that needed to simulate a conversation but lacked the sophistication to generate much original text.

ELIZA scanned user input for certain keywords, such as “I,” “you,” or “mother.” Such words triggered an associated rule to spit out a sentence or question in response, often by inserting some of the user’s words into a response template. It gave the impression that the bot was actually replying to the content of the user’s statement. If the user wrote “I am sad,” the machine might reply, “How long have you been sad?” The meaning of the word “sad” was irrelevant. The chatbot simply copied the word over from the user input. It worked just as well with “depressed,” “happy,” “angry,” or any other adjective. If ELIZA didn’t pick up any keywords in a user’s statement, then it would generate a generic response, such as “I see,” or “Please go on.”
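The keyword-and-template mechanism described above can be sketched in a few lines of Python. This is only an illustration, not Weizenbaum's original program: the rules, the names `RULES`, `FALLBACKS`, and `respond`, and the way fallbacks are rotated are all assumptions made for the example.

```python
import re

# Hypothetical rules for illustration only; they are not taken from Weizenbaum's script.
# Each rule pairs a keyword pattern with a response template.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]

# Generic replies used when no keyword matches (both are quoted in the article).
FALLBACKS = ["I see.", "Please go on."]


def respond(user_input: str, turn: int = 0) -> str:
    """Return a templated reply, or a generic fallback if no rule fires."""
    # Drop surrounding whitespace and trailing punctuation before matching.
    text = user_input.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # The captured words are copied over verbatim; their meaning is never examined.
            return template.format(match.group(1).lower())
    # No keyword found: rotate through the generic replies (an assumed policy).
    return FALLBACKS[turn % len(FALLBACKS)]
```

Calling `respond("I am sad.")` returns "How long have you been sad?", and swapping in "depressed" or "angry" works identically, because the program never looks past the surface of the words it copies.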

The illusion worked convincingly. In his 1966 journal article, Weizenbaum noted that some subjects were “very hard to convince” that ELIZA was not human. In fact, when Weizenbaum had his secretary test the system, she asked him to leave the room so she could continue her conversation with the “therapist” in private. Weizenbaum had stumbled upon a phenomenon that would come to be called “the ELIZA effect”: our tendency to project human qualities onto inanimate machines.

Weizenbaum saw that the temptation to attribute humanity or personality to ELIZA—and other machines—didn’t appeal only to his secretary. He grew seriously concerned when some psychiatrists trumpeted that ELIZA (or a variation thereof) might actually be useful for therapeutic purposes. Weizenbaum was appalled that anyone might trust this toy with real mental health concerns. His intimate knowledge of ELIZA’s blind, algorithmic workings made it obvious to him that it shouldn’t be treated like a real therapist. It was a system as inert and unconscious as a handheld calculator, and it had nothing to say about human life. He considered the idea “obscene.”

The disconcerting revelation of the ELIZA effect began to unsettle Weizenbaum, prompting him to reconsider the framework for the relationship between humans and technology. A 1998 interview revealed the depth and incisiveness of Weizenbaum’s thought. It outlined his critique of the mechanistic view of the universe that he thought undergirded the attempt to replace human beings with machines.

“These [AI-promoting] authors, among others, are propagating an extremely dangerous image of man at the threshold of the millennium,” he told Bernhard Pörksen in the interview.

“It is based on the idea that man is a machine that can—in principle and in the near future—be understood and decoded to correct it and improve it. The central dogma of this view of man is the idea that every aspect of life is computable, meaning it can be broken down into calculable and formalizable processes.”

AI pioneers Claude Shannon, John McCarthy, Ed Fredkin, and Joseph Weizenbaum. While some pushed the boundaries of machine intelligence, Weizenbaum later emerged as one of its most forceful critics. CC BY-NC 2.0

In the interview, Weizenbaum took aim at the emergent theory of transhumanism, arguing that it was based on a fundamentally flawed conception of human nature and reality as a whole. AI enthusiasts dreamed of a “better model” of the human being, humanity 2.0, constituting a facilitated evolutionary leap to a higher plane, even if it meant rendering humanity 1.0 obsolete. Weizenbaum worried that these thinkers and scientists were prepared, with a kind of quasi-religious instinct, to sacrifice the man of today on the altar of the man of the future. Indeed, he went so far as to call materialistic science “the predominant world religion today.”

Weizenbaum worried that AI proponents, by reducing mankind to the level of the machine and elevating the machine to the level of a god, were dehumanizing people. He compared their thinking to Nazi rhetoric in World War II.

He explained, “I believe that the essential common ground between National Socialism and the ideas of [AI theorist] Hans Moravec lies in the degradation of the human and the fantasy of a perfect new man that must be created at all costs.”

A Threat to Humanity

Weizenbaum spoke from experience, because he and his family had fled from Germany to the United States in 1935 after witnessing Nazi activity.

Yet the worship of machines and technology could be even more cataclysmic than the poisonous ideology of Nazism, because its target wasn’t one specific race, but all of humanity. At the end of the mad pursuit for the perfect human being, Weizenbaum noted, “man is no longer there.”

Weizenbaum would likely have agreed with C.S. Lewis’s point: “Human nature will be the last part of Nature to surrender to Man. … The battle will indeed be won. But who, precisely, will have won it? … Man’s final conquest has proved to be the abolition of Man.”

For Weizenbaum, a false image of the human being was more dangerous than any human weapon. It was frequently the motive for using weapons; it wasn’t itself the sword, but it was the arm that swung the sword. He saw dire peril in equating human and machine. Calling them equal ignored human dignity and respect for life, turning human bodies into mere “meat machines.”

He explained, “We can learn from the history of this century, perhaps the most brutal century, what a decisive role the image of man played in the crimes of the past, remembering that the most atrocious crimes were made possible because the perpetrators denied the humanity of the victims.”

Weizenbaum’s opponents based their hope of transcending human nature through machine intelligence on the assumption that humanity, human behavior, and human experience would eventually be completely quantified, understood, and solved—and therefore replicated and improved. On the other hand, Weizenbaum insisted that a human being and the universe that human being inhabits will never be completely explicable or “hackable.”

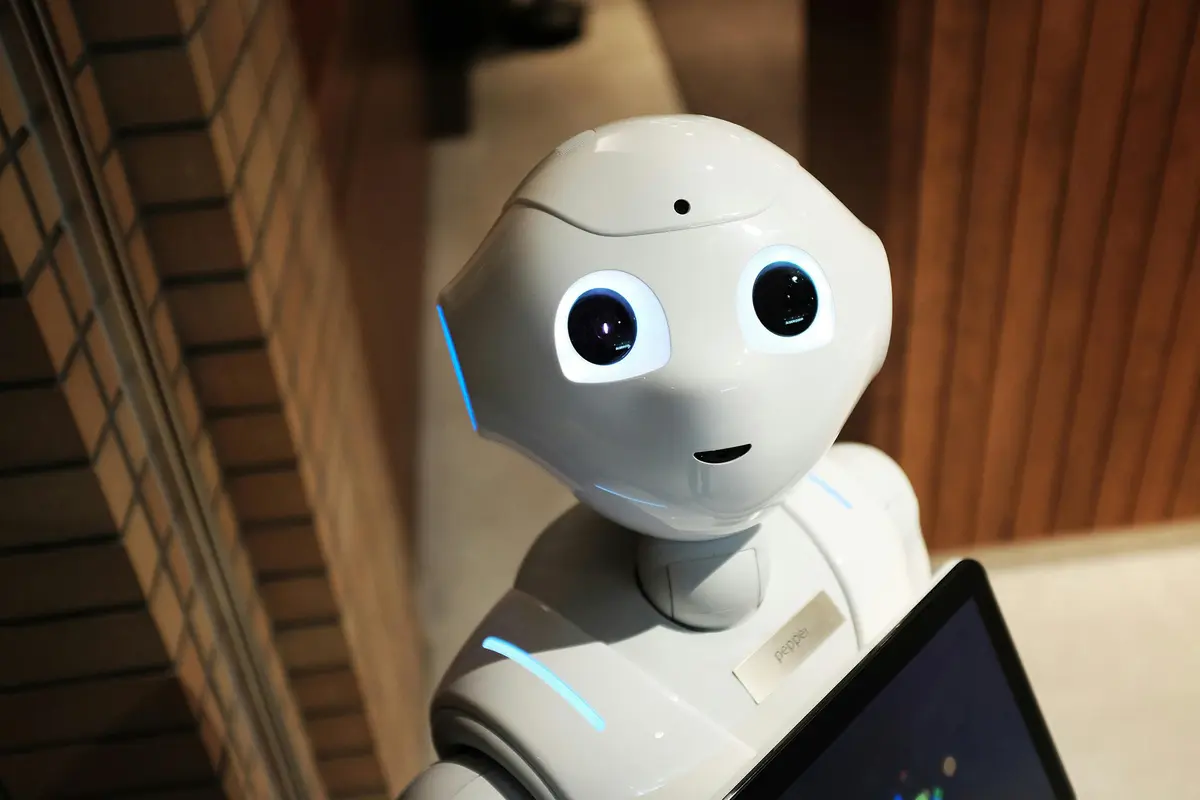

As machines became more advanced, Joseph Weizenbaum warned that the real danger isn’t just their power—it’s how we see ourselves compared to them. Alex Knight/Pexels

“The world is full of mysteries, and the credo of the AI scene that everything is calculable denies the mystery of the living,” he said. “It creates the illusion of complete transparency and suggests that all aspects of our existence can be unraveled.”

Although his warnings sounded to many at the time like the backward fears of a sclerotic and out-of-touch curmudgeon, today they ring with increasing relevance.

Firm Convictions, Consistent Warnings

In the decades after ELIZA, and particularly after he published “Computer Power and Human Reason: From Judgment to Calculation” in 1976, Weizenbaum’s staunch resistance to artificial intelligence made him something of an academic and scientific outcast.

“There are certain tasks which computers ought not be made to do, independent of whether computers can be made to do them,” he wrote in the book.

Weizenbaum vocally opposed the ideas of John McCarthy and Marvin Minsky, two of the organizers of the 1956 Dartmouth workshop, which marked the birth of AI research. Both McCarthy and Minsky became pioneers of AI research, which was funded, in its early days, by the U.S. military. McCarthy castigated Weizenbaum’s book as “moralistic and incoherent,” and he and his colleagues carried on.

Weizenbaum’s warning went largely unheeded.

Here we are today, where the sophistication of our chatbots far outstrips that of ELIZA, making it easier to fall into the trap of considering these machines our equals as persons. We’ve seen people argue that chatbots have sentience, fall in love with chatbots, and even be convinced by chatbots to commit suicide.

Such developments would likely sadden the German scientist. Weizenbaum’s daughter, Miriam, told Smithsonian Magazine that her father “would recognize the tragedy of people attaching to literally zeros and ones, literally attaching to code.”

Weizenbaum retired in 1988 from MIT, where he had been a professor, and moved back to Germany in 1996, where he found a more sympathetic audience for his views. He died in 2008.

An introspective, melancholic, and self-critical man, yet a man of firm conviction and mystical intuition, Weizenbaum recognized the wonder and mystery of the world with a certainty greater than any mathematical proof. There is something more than zeros and ones, something beyond gears and wheels and atoms. There is something in us and in our experiences that will forever elude the grasp of prediction, automation, and calculation.

Weizenbaum was sensitive to those experiences.

He told Pörksen: “There is the experience of sorrow and shock, there is the sudden joy in the morning, and there is the experience of love between people. Well, a miracle is a miracle. You cannot describe it. You would have to be an artist to approach it.”

In “Computer Power and Human Reason,” Weizenbaum described a moment of this human love and connection.

He wrote: “When my children were still small, I sometimes stood together with my wife bent over the bed in which they slept. We talked to each other without speaking; it was the repetition of a scene as old as humanity itself.”

Something in that moment transcended language, let alone algorithms, Weizenbaum argued.

“My view is indeed that there is something unspeakable, a living truth that cannot be put into words,” he wrote.

This mystical bent made Weizenbaum a hero to some, and a laughingstock to others. Yet one need not share his spiritual intuitions to appreciate his common-sense concerns. For all their technical wizardry, AIs are morally inert, lacking the sense of conscience that we all experience in our hearts. For that reason, they should not be put in positions to make moral judgments.

In Weizenbaum’s own words, “What emerges as the most elementary insight is that, since we do not now have any ways of making computers wise, we ought not now give computers tasks that demand wisdom.”

This challenge is one that we would do well to reflect on in our age.